Killing CORS Preflight Requests on a React SPA

Our company's recruitment platform evolved from a Rails application into a classic SPA.

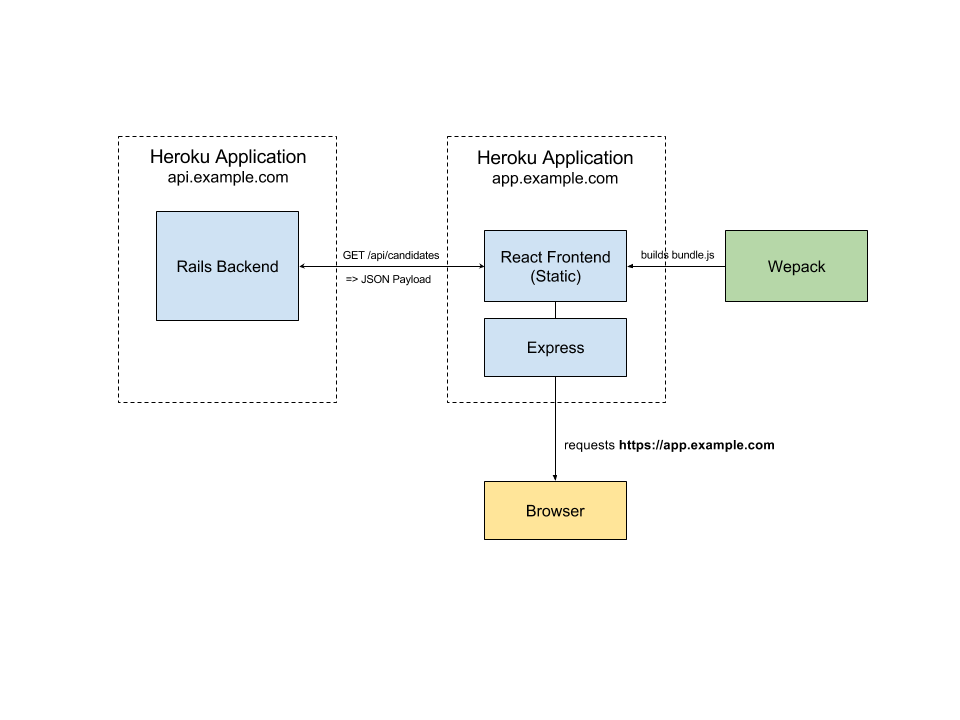

The Rails app serves as a RESTful API backend, while the frontend app is built on React/Flux (with some Relay). Here's a bad visualization of what the setup looks like:

The two-app setup allowed us to iterate on the client and the backend independently. We use Express on the frontend app to set cache headers and handle HTML5 routing.

When the team launched the app, we got a lot of praise from users for its speed and UI snappiness compared to the previous Rails app.

If this feels "so 2015" to you, that's because we started building the app in early 2015 and went down the centralized RESTful API route.

CORS, the dirty word

Whenever a teammate mentions "seems like a CORS issue", it strikes fear into the deepest reaches of my soul.

With this setup, we had to deal with making CORS requests from app.example.com to api.example.com in one of two ways:

- Proxy requests so that they never cross origins and CORS doesn't apply

- Have the API serve CORS headers for app.example.com

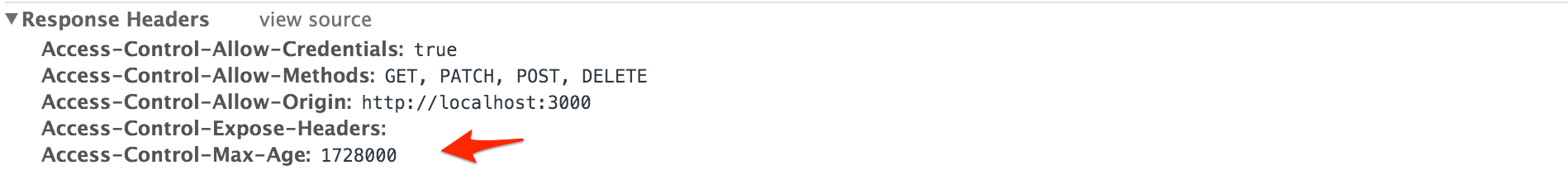

We chose the second option to avoid the complexity of dealing with forwarding and proxies. Serving the headers is easy thanks to the rack-cors gem. On normal requests, the response headers look like this:

Access-Control-Allow-Credentials:true
Access-Control-Allow-Methods:POST, GET
Access-Control-Allow-Origin:http://localhost:3000
Access-Control-Expose-Headers:
Access-Control-Max-Age:1728000
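These are the headers the browser inspects before it hands a cross-origin response to our JavaScript. The rules tighten when a request carries credentials: the Access-Control-Allow-Origin value must match the page's origin exactly (the `*` wildcard is rejected), and Access-Control-Allow-Credentials must be `true`. Below is a minimal, simplified sketch of that check; `passesCorsCheck` is a hypothetical helper, and passing the headers as a plain object with lowercased names is an assumption for brevity.

```javascript
// Simplified sketch of the browser's CORS check on an actual (non-preflight) response.
// `headers` is a plain object with lowercased header names (an assumption for brevity).
function passesCorsCheck(headers, requestOrigin, withCredentials) {
  const allowOrigin = headers["access-control-allow-origin"];
  if (allowOrigin === undefined) return false; // no CORS header: the response is blocked

  if (withCredentials) {
    // Credentialed requests need an exact origin echo; "*" is not accepted.
    if (allowOrigin !== requestOrigin) return false;
    return headers["access-control-allow-credentials"] === "true";
  }
  // Anonymous requests accept either the wildcard or an exact match.
  return allowOrigin === "*" || allowOrigin === requestOrigin;
}

// Mirrors the development headers shown above.
const apiResponse = {
  "access-control-allow-credentials": "true",
  "access-control-allow-origin": "http://localhost:3000",
};
console.log(passesCorsCheck(apiResponse, "http://localhost:3000", true)); // true
console.log(passesCorsCheck(apiResponse, "https://app.example.com", true)); // false
```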

What are Preflight Requests

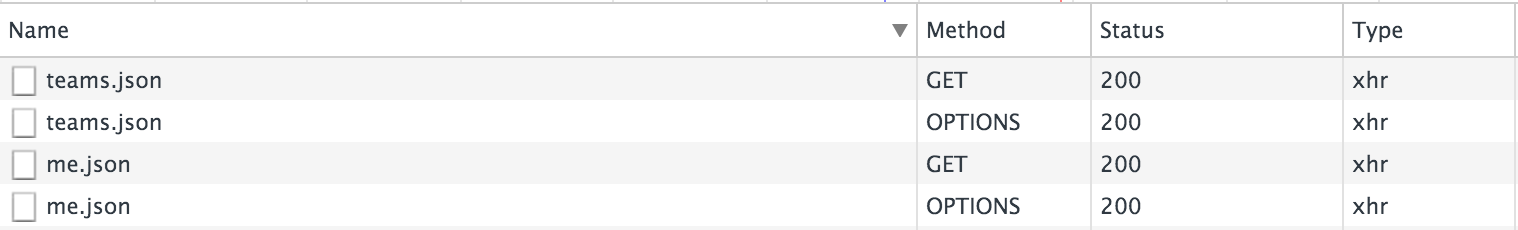

One thing that came with CORS was preflight requests. When I first saw them appear in the web inspector, they confused me greatly.

Preflight requests are made when requests are not "simple".

"Preflighted" requests first send an HTTP request by the OPTIONS method to the resource on the other domain, in order to determine whether the actual request is safe to send. Cross-site requests are preflighted like this since they may have implications to user data. (MDN)

After more reading, I believe the preflight request is a "security by awareness" measure. Examples:

- If the browser makes an OPTIONS request to a server without any CORS awareness, the preflight fails, protecting the server from potentially executing a forged cross-domain DELETE request.

- If the browser did not make an OPTIONS request, a CORS-aware server could still refuse the request itself. Web frameworks don't do this, relying instead on better security measures, such as CSRF tokens or sessionless authentication.

A good SO answer explains this in detail.

It is also unfortunate that one of the bigger use cases, sending cross-site JSON POST/PATCH requests, can never be a simple request: setting the Content-Type to application/json always triggers a preflight.
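To make the OPTIONS request less mysterious, here is a rough sketch of what the browser asks the server before a non-simple request. The function name `buildPreflight` and the `/jobs` path are made up for illustration; the three request headers (Origin, Access-Control-Request-Method and Access-Control-Request-Headers) are the ones browsers send, with the header names lowercased, sorted and comma-separated.

```javascript
// Sketch of the OPTIONS request a browser sends before a non-simple request.
// `unsafeHeaderNames` are the non-safelisted headers the page set (e.g. Authorization).
function buildPreflight(url, origin, method, unsafeHeaderNames) {
  const headers = {
    Origin: origin,
    "Access-Control-Request-Method": method,
  };
  if (unsafeHeaderNames.length > 0) {
    // Browsers send the names lowercased, sorted, and comma-separated.
    headers["Access-Control-Request-Headers"] = unsafeHeaderNames
      .map((name) => name.toLowerCase())
      .sort()
      .join(",");
  }
  return { method: "OPTIONS", url, headers };
}

// A JSON GET carrying an Authorization header, like the one discussed later.
const preflight = buildPreflight(
  "https://api.example.com/jobs",
  "https://app.example.com",
  "GET",
  ["Content-Type", "Authorization"]
);
console.log(preflight.headers["Access-Control-Request-Headers"]); // authorization,content-type
```

The server has to answer this OPTIONS request with matching Access-Control-Allow-* headers before the browser sends the real GET, which is where the extra round trip comes from.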

Fighting Preflight requests, the latency multiplier

This didn't really bother the team at first because each preflight adds little overhead, and there were a myriad of higher-value issues to tackle.

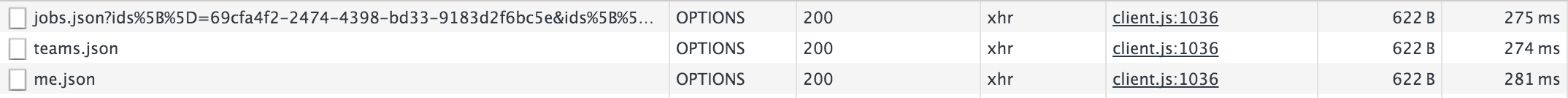

Months later, I found the app to be a little slow and looked at the console.

"Holy smokes! That's a whole lot of latency, adding almost a second to the data loading for no real reason."

Why didn't I notice this before? I'm between relocations and currently working out of Singapore, which is geographically far from Heroku's Virginia data centers. This means that users in our Hong Kong and London offices are probably facing a significant amount of latency, a big #fail for me.

This is a good lesson: any extra request can multiply latency. Since each non-simple CORS request makes a preflight check, preflights become an O(n) latency multiplier. Well, time to really figure this out.
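A back-of-the-envelope model of the multiplier, assuming each preflight costs exactly one extra round trip and ignoring connection setup and the preflight cache. The 250 ms round trip is a hypothetical figure for Singapore to US East, not something we measured, and `preflightOverheadMs` is a made-up helper:

```javascript
// Each non-simple request waits one extra round trip for its preflight.
// Requests that depend on each other (sequential) add these delays up;
// fully parallel requests overlap, so the best case is a single extra round trip.
function preflightOverheadMs(requestCount, roundTripMs, sequential = true) {
  if (requestCount === 0) return 0;
  return sequential ? requestCount * roundTripMs : roundTripMs;
}

// Hypothetical: 4 dependent API calls with a 250 ms round trip.
console.log(preflightOverheadMs(4, 250)); // 1000
console.log(preflightOverheadMs(4, 250, false)); // 250
```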

Cache requests, the low-hanging fruit

Preflight results can be cached by the browser if we remember to serve the Access-Control-Max-Age header, so make sure it is included in your response headers.

But... most browsers cap how long a preflight result can be cached, far below the 1728000 seconds (20 days) we were serving. For example, WebKit allows a maximum of 600 seconds:

static const auto maxPreflightCacheTimeout = std::chrono::seconds(600);

This makes caching much less useful because the cache will be cold most of the time. Boo.
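The clamping is easy to model: the browser keeps a preflight result for the smaller of the server's Access-Control-Max-Age and its own cap, so our 1728000 seconds collapses to WebKit's 600. `effectivePreflightCacheSeconds` is a hypothetical helper; the 600-second default comes from the WebKit constant above, and other browsers' caps can be passed in as the second argument.

```javascript
// The browser caches a preflight result for min(server max-age, browser cap).
function effectivePreflightCacheSeconds(maxAgeHeader, browserCapSeconds = 600) {
  const requested = Number.parseInt(maxAgeHeader, 10);
  // Simplification: an unparseable or negative value is treated as "no caching" here;
  // real browsers fall back to a short built-in default when the header is absent.
  if (Number.isNaN(requested) || requested < 0) return 0;
  return Math.min(requested, browserCapSeconds);
}

console.log(effectivePreflightCacheSeconds("1728000")); // 600
console.log(effectivePreflightCacheSeconds("300")); // 300
```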

Simple Requests, the holy grail

After reading a great blog post and MDN's CORS docs, I realized that the browser does not make a preflight request when all of these conditions are met:

- Only uses the GET, POST, or HEAD methods

- Doesn't set any headers manually other than those set by the browser and these:
  - Accept
  - Accept-Language
  - Content-Language
  - Content-Type

- For Content-Type, the only allowed values are:
  - application/x-www-form-urlencoded
  - multipart/form-data
  - text/plain
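The rules above are mechanical enough to write down. Below is a simplified classifier, `isSimpleRequest` (a hypothetical name), that only looks at the headers the page sets itself; headers the browser adds on its own, such as User-Agent, are not passed in. It ignores the finer value restrictions the Fetch spec places on safelisted headers, so treat it as an approximation of the list above rather than a complete implementation.

```javascript
// Simplified classifier for the "simple request" rules listed above.
const SIMPLE_METHODS = new Set(["GET", "HEAD", "POST"]);
const SAFELISTED_HEADERS = new Set([
  "accept",
  "accept-language",
  "content-language",
  "content-type",
]);
const SIMPLE_CONTENT_TYPES = new Set([
  "application/x-www-form-urlencoded",
  "multipart/form-data",
  "text/plain",
]);

// `headers` holds only the headers the page sets manually.
function isSimpleRequest(method, headers) {
  if (!SIMPLE_METHODS.has(method.toUpperCase())) return false;

  for (const [name, value] of Object.entries(headers)) {
    const lower = name.toLowerCase();
    if (!SAFELISTED_HEADERS.has(lower)) return false;
    if (lower === "content-type") {
      // Compare only the MIME type, dropping parameters like "; boundary=..." or "; charset=...".
      const mimeType = value.split(";")[0].trim().toLowerCase();
      if (!SIMPLE_CONTENT_TYPES.has(mimeType)) return false;
    }
  }
  return true;
}

// Headers resembling our original GET call (the token is a placeholder).
console.log(
  isSimpleRequest("GET", {
    Accept: "application/json",
    Authorization: "Bearer <token>",
    "Content-Type": "application/json",
  })
); // false
console.log(isSimpleRequest("GET", { Accept: "application/json" })); // true
```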

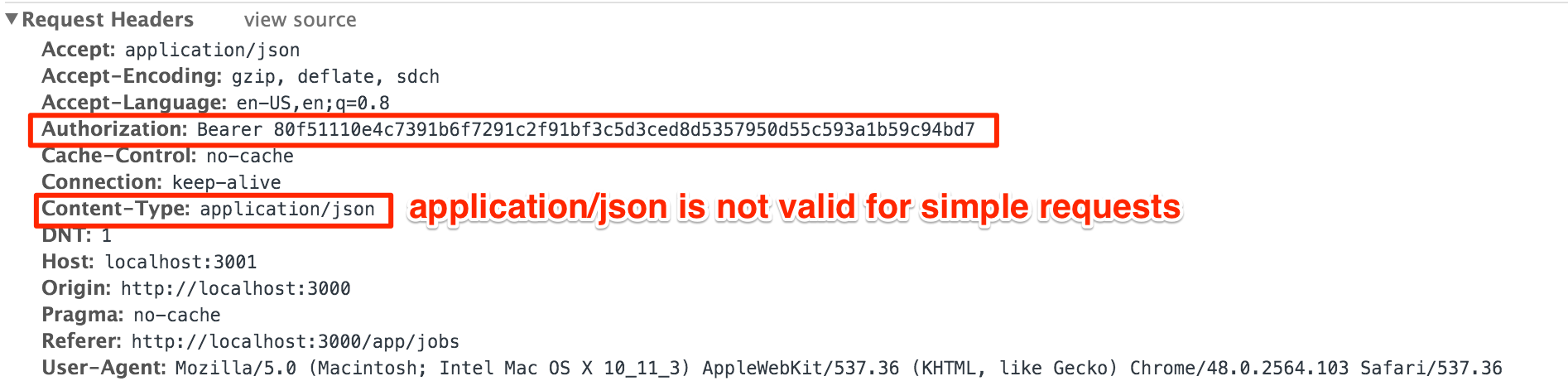

Wow, my first thought was "headers are messy and this is so restrictive". As a lazy developer, I've shied away from dealing with headers, relying on abstractions provided by libraries and frameworks. In reality, it was easier than I imagined. Given the request headers that our app's GET API call generates:

We can see that the request is not simple because of the custom Authorization header and a Content-Type that is set to a disallowed value (application/json).

We don't need Content-Type for GETs

I took a dive into the API wrapper we built over Superagent to issue the GET requests:

var headers = function() {
  return ({
    "Authorization": `Bearer ${auth.accessToken()}`,
  });
};

get(path, query = {}) {
  logoActions.loadingRequest();
  return new Promise((resolve, reject) => {
    request
      .get(apiPath(path))
      .query(query)
      .set(headers())
      .type("json") // DOH. Why we do this????
      .accept("json")
      .end(function(error, response) { successfulDataLoad(error, response, resolve, reject); });
  });
}

GET requests don't have a body and hence do not need to specify a Content-Type. Removing the .type() call removed the header.

Moving Authorization to the query string

The app uses sessionless authentication powered by the Doorkeeper gem, and the Authorization header carries the access token used to identify the user after logging in.

In order to remove the header, we have to move the token to the query string. What did you just say????

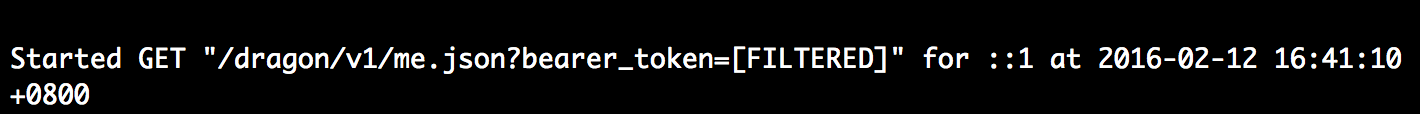

In terms of security, all API calls should be using HTTPS, and there is little difference between putting the token in headers or in the query string. Do beware that the token should be filtered out of the logs. In our setup, we used the parameter name Doorkeeper expects, bearer_token, and it gets filtered out by Rails when logging requests (I'm guessing that's Doorkeeper's handiwork).
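In the Superagent wrapper this boils down to sending the token through `.query()` instead of `.set()`. Here is a standalone sketch of the same idea using the standard URL API; `withBearerToken` and the `/jobs` path are hypothetical, while `bearer_token` is the parameter name Doorkeeper reads, as described above.

```javascript
// Hypothetical helper: put the access token in the bearer_token query parameter
// instead of an Authorization header, so the GET stays a simple request.
function withBearerToken(apiUrl, query, accessToken) {
  const url = new URL(apiUrl);
  for (const [key, value] of Object.entries(query)) {
    url.searchParams.set(key, String(value));
  }
  url.searchParams.set("bearer_token", accessToken);
  return url.toString();
}

console.log(withBearerToken("https://api.example.com/jobs", { page: 2 }, "abc123"));
// https://api.example.com/jobs?page=2&bearer_token=abc123
```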

We also have to note that the GET URLs are now replayable, since the authentication information is contained in them. Most normal users won't inspect the console and copy URLs to paste around, but it's a good caveat emptor for developers.

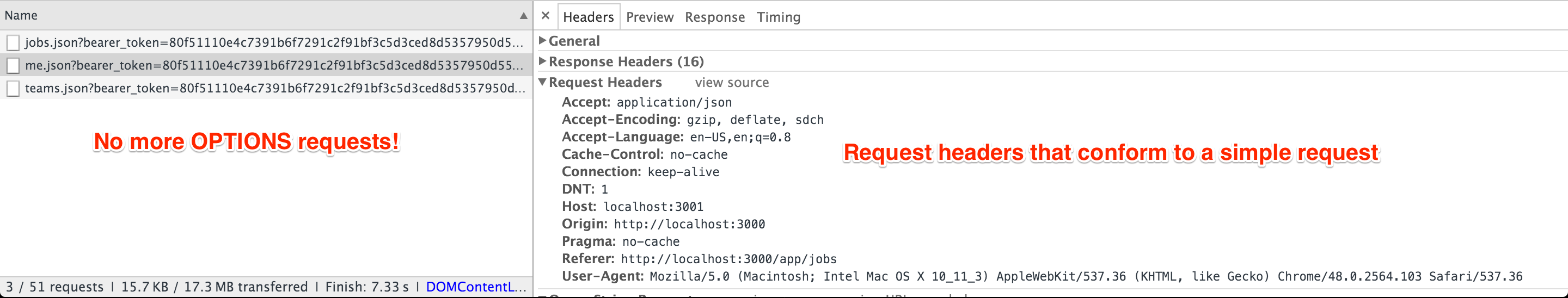

Taking profit on GET requests

Phew, we got through all that. Now the request headers are simple and no more OPTIONS requests are made.

If you have a typical web app that's GET-heavy, this should already be a significant win and you can stop here.

But we're using Relay...

Relay uses POST requests to send queries to our GraphQL endpoint. I believe Relay chose POST because the queries are too long for GET requests: a typical query on our page, serialized into the URL, is around 1900 characters, and the URL length limit is around 2083 characters.
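A quick way to sanity-check this before switching to GET is to build the URL and measure it. Percent-encoding turns characters such as braces into three characters each, so the raw query text understates the final URL length. `getUrlLength` and `fitsInGetUrl` are hypothetical helpers, the endpoint is a placeholder, and 2083 is the limit quoted above.

```javascript
// Build the GET URL a GraphQL query would need and measure it after encoding.
function getUrlLength(endpoint, query, variables) {
  const url = new URL(endpoint);
  url.searchParams.set("query", query); // URLSearchParams percent-encodes the value
  url.searchParams.set("variables", JSON.stringify(variables));
  return url.toString().length;
}

function fitsInGetUrl(endpoint, query, variables, limit = 2083) {
  return getUrlLength(endpoint, query, variables) <= limit;
}

const endpoint = "https://api.example.com/graph";
console.log(fitsInGetUrl(endpoint, "query { viewer { id } }", {})); // true
console.log(fitsInGetUrl(endpoint, "x".repeat(2100), {})); // false
```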

Taking it to the extreme - Don't do this at home

In order to make Relay's request simple, we have to change the default application/json Content-Type of the POST request to something else. Two possible strategies:

1st Attempt - Send JSON but set headers to text/plain

Since we know the API request to /graph from Relay is always JSON, we can tell Relay to set the Content-Type header to text/plain so that it qualifies as a simple request.

Next, we instruct the backend to change the Content-Type back to application/json before the request reaches Rails' params parsing layer, and the great scam is done :)

This deception was a big hack to implement because it goes against the behavior of both libraries. For example, Relay uses fetch under the hood, and it wants to set the Content-Type conveniently for you based on the data.
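For reference, the client half of this trick amounts to the fetch options below: the body is still JSON, and only the label changes. `buildTextPlainInit` is a hypothetical helper, the query and variables are placeholders, and the server-side rewrite (for example, a Rack middleware in front of Rails' params parsing) is not shown.

```javascript
// 1st attempt, client side: send the same JSON body but label it text/plain
// so the POST qualifies as a simple request. The server must still parse it as JSON.
function buildTextPlainInit(queryString, variables) {
  return {
    method: "POST",
    headers: {
      Accept: "*/*",
      "Content-Type": "text/plain", // safelisted value, so no preflight
    },
    body: JSON.stringify({ query: queryString, variables }),
  };
}

const init = buildTextPlainInit("query { viewer { id } }", { first: 10 });
console.log(init.headers["Content-Type"]); // text/plain
console.log(JSON.parse(init.body).variables.first); // 10
```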

2nd Attempt - Send data as formData instead, qualifying as a simple request

Relay's default network layer has this code to send queries using fetch.

Pseudo coding it:

return fetch(this._uri, {
  ...this._init,
  body: JSON.stringify({
    query: request.getQueryString(),
    variables: request.getVariables(),
  }),
  headers: {
    ...this._init.headers,
    'Accept': '*/*',
    'Content-Type': 'application/json', // this header is what makes the POST non-simple
  },
  method: 'POST',
});

We could alter it to produce formData instead:

defaultNetworkLayer._sendQuery = function(request) {
  var formData = new FormData();
  formData.append("query", request.getQueryString()); // Always a string anyway
  formData.append("variables", JSON.stringify(request.getVariables())); // A JS object, JSON it.
  return fetch(this._uri, {
    ...this._init,
    body: formData,
    headers: {
      ...this._init.headers,
      'Accept': '*/*',
      // No Content-Type here: fetch sets multipart/form-data (with a boundary) for FormData bodies
    },
    method: 'POST'
  });
};

This causes fetch to send the body as multipart/form-data, which qualifies as a simple request.
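You can check which Content-Type fetch picks for a FormData body without sending anything by constructing a Request (available globally in browsers and Node 18+). The URL and field values below are placeholders. Leaving Content-Type unset matters: fetch adds the `boundary` parameter itself, and a hand-written header would lack it.

```javascript
// Let fetch serialize a FormData body and inspect the Content-Type it chooses.
const formData = new FormData();
formData.append("query", "query { viewer { id } }");
formData.append("variables", JSON.stringify({ first: 10 }));

const req = new Request("https://api.example.com/graph", {
  method: "POST",
  body: formData,
});

// Prints "multipart/form-data; boundary=..." (the boundary value varies per request).
console.log(req.headers.get("content-type"));
```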

The query param was a string, so it's the same as before on Rails. However, variables is now stringified JSON, and we have to parse it back manually on the Rails backend.

def show
  # variables = params[:variables]           # old code when using JSON
  variables = JSON.parse(params[:variables])  # new code after changing fetch to formData
  execution = Dragon::Schema.execute(params[:query],
    variables: variables,
    context: {
      user: current_user
    }
  )
  render json: execution
end

This works, and Relay's POST request no longer triggers a preflight. I'm not sure the cost is worth it, so I did not ship this to production.

Conclusion

If you have CORS preflight requests and latency-sensitive users:

- Use the preflight cache

- Make sure GET requests are simple

For my own app, fixing the GET requests was a significant win, though we still have latency with the Relay features (currently 10% of the app).

After going down the rabbit hole, I think it's easier and more straightforward to fight CORS with a reverse proxy, such as nginx forwarding requests to your API server, i.e. app.example.com/api proxies requests to api.example.com. We're hosted on Heroku, which makes this less trivial, but we will explore it as Relay / GraphQL takes over more of the data loading from RESTful endpoints.

Thanks for reading this! I hope it helped, even if the conclusion is "preflight requests are too troublesome, I'm going with a proxy" :)